|

The first Raspberry Pi I had, got my attention with all the potentials it had

The first thought I had is - why couldn't it host a database ? My old 386 with a 66Mhz

process could and ran that just fine with Slackware Linux. I believe the total hard

drive space on that computer was about 200Mb.

In a nutshell, the purpose of this project was to be able to evaluate the upcoming

Tableau server release for the Linux platform. That release will be announced at the 2016

Austin, Texas Tableau conference.

Well, for that to work, I need a database to pull

information from - and the database can not be a bottleneck for the Tableau server.

Tableau, by the way, in a nutshell, is an awesome data visualization actionable business

intelligence tool. That means, that with a few clicks Tableau can connect to a myriad

of databases and types and in a matter of minutes you start exploring your datbase

in graph mode, none the less. Instantly you can find outliers of the data, trends,

groupings and more. With a few clicks you can drill down to individual records to see

what the data is all about. The tool is awesome - and far beyond and superior to Excel

generated graphs. So, if you are happy with the dashboard you created - you can save

that to a Tableau server and from there on, your managers can interactively move around

the data and aswer their own BI questions in minutes - unlike in the old days - with

a requisition to the IT department !

Thus, the Raspberry Pi cluster was born. I generated about 2.75 million random

financial auto loan records and dealership information. The records go all the way

back to 20014-january-1st to current date - 2016-october-16.

I installed mySQL on each of the Raspberry Pis - which, from now on I will just

call "nodes" - with identical configuration and identical data. Of course the only difference on each

node configuration is the bind_address, since that is tied to the IP address of the node.

Plan "A" was to create Federated engine tables on the first node and query that hoping

that it will pull the necessary query results from each node. That did not happen since

I noticed that the results took an unusually long time. Further digging revealed that

the mySQL Federated engine table downloads the entire table content, then joins that result set

to the rest of the node1 dimension tables, even though that's not how I wrote up my SQLs.

This test was a complete failure since I already had identical

data on each nodes - I did not want to download the same data I already had, three more times.

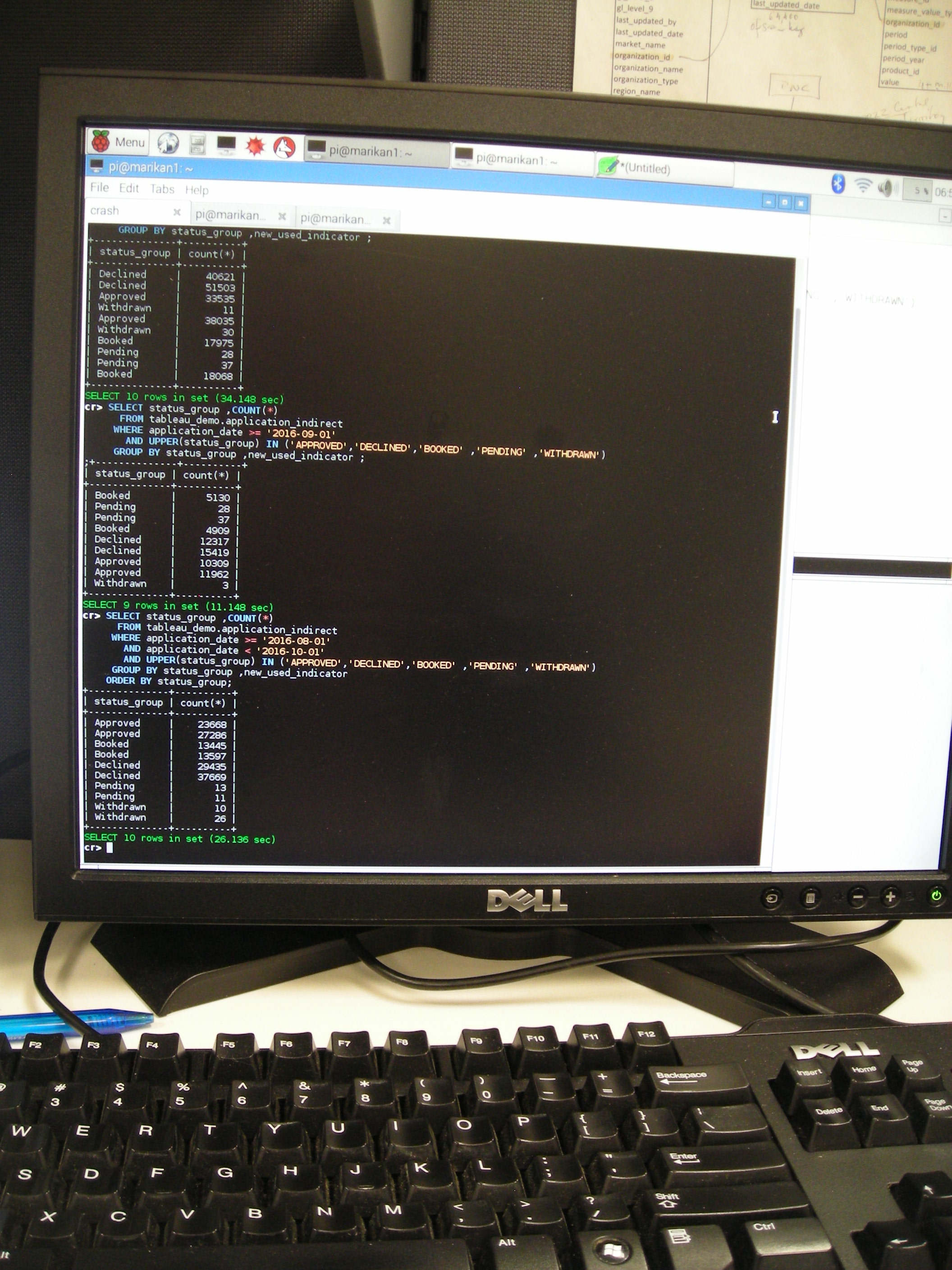

Plan "B". I wanted to pull 4 different month worth of data parallel processed and the queries

distributed to each node and wanted only the result of the query returned not the entire table content

So for example, node4 would return January data, node3 February, node2 March and node1

would process and return April data. So, I wrote the SQL for each node, then wrote a small

Perl script, threaded to send out the query to each node, process it then return the

data by inserting it into a Memory engine table on node1 and when all nodes inserted their

data, summarize the Memory engine table. This process was a very good success, the worst

time it ran is 19 seconds without any indexes on 2.7 million records. Once I created some

composite indexes the return time dropped to 3 seconds or less. Although this is a very

successful attempt to distributed queries and run them parallel simultaneously - unfortunately

Tableau can not connect to these result set memory tables, and start drilling down to

individual records because these tables are the result of a Perl script with predefined SQLs

Plan "C" Next I installed a nuber of clustered database technologies, replicating them on each of the nodes -

so each node is as close to the other as they can be - except when one node has to be a master.

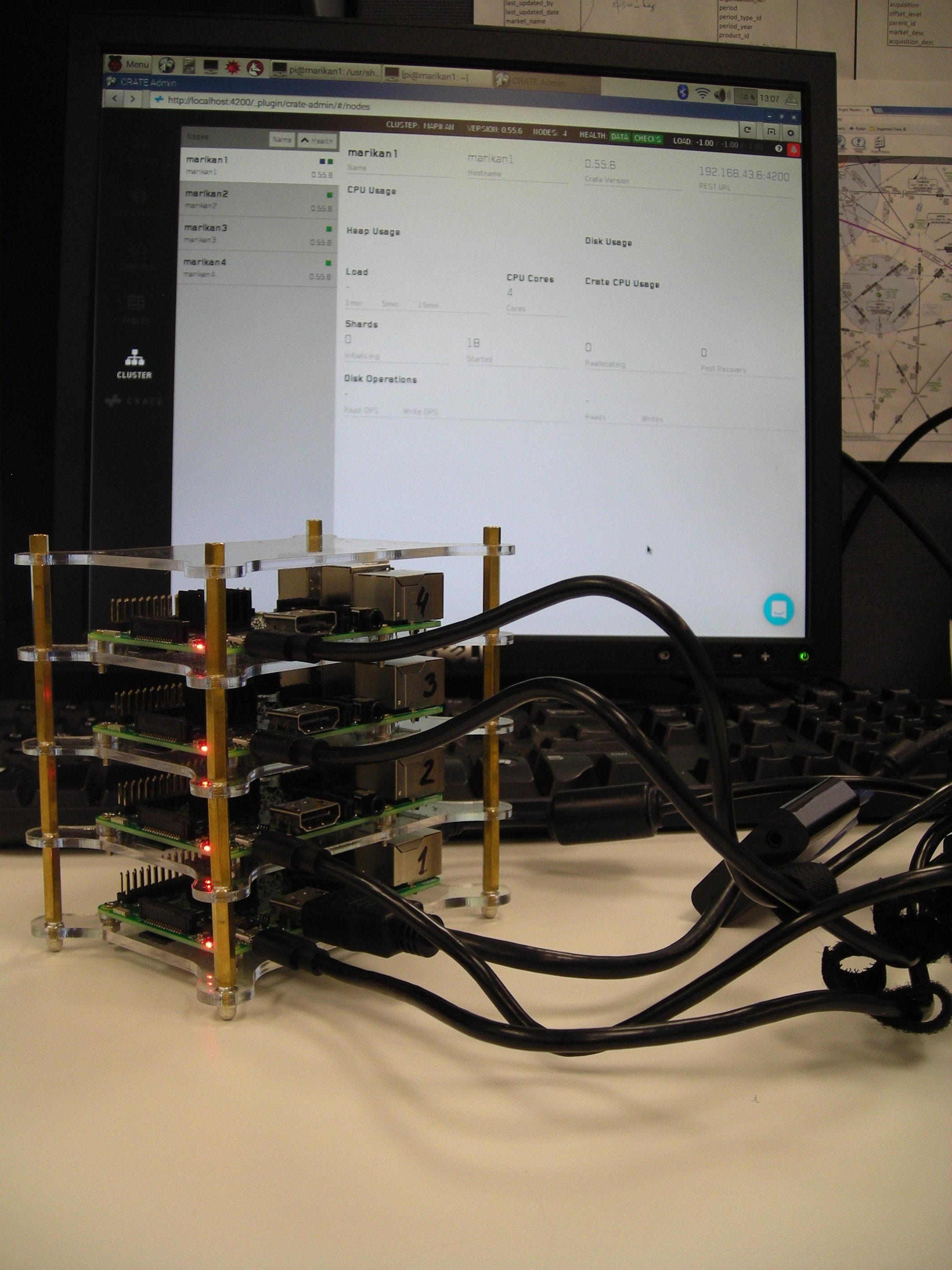

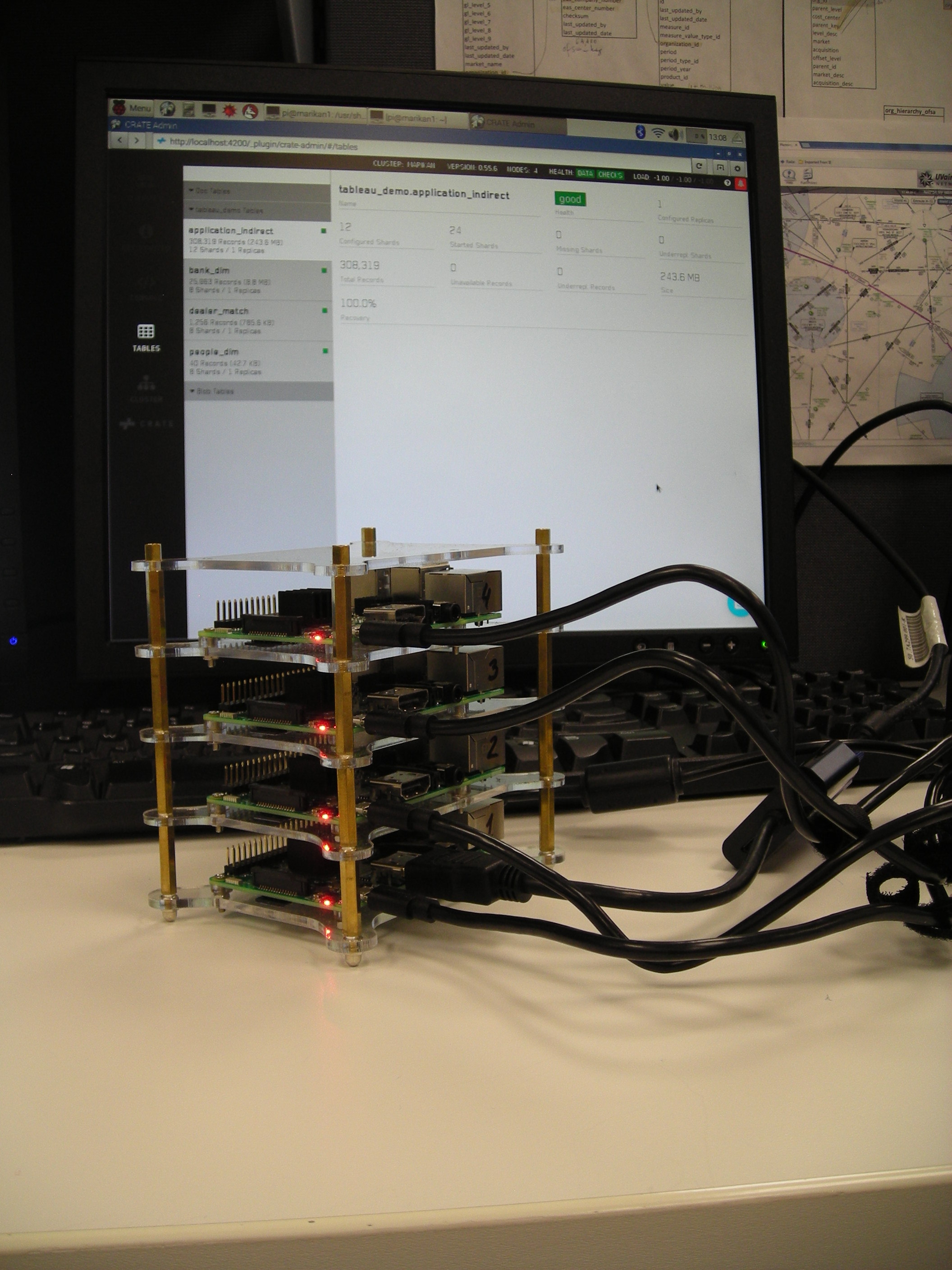

First I tried Crate database from http://crate.io. The set up went relatively easily and

aside from the data import slowness, it runs very nicely and so far is the best solution

I found for the Raspberry Pi cluster. The one difficulty I found with Crate is that loading

data from a JSON file of 300,000 records took 29 minutes. At this rate I could load only 3 months

worth of data, even though I disabled the refresh interval and the indices.store.throttle.type to 'none'.

Another aspect that I don't fully understand is why can't it use a CSV file, when the CSV file

converted JSON the size of the new file is three times that of the CSV data.

In any case Crate advertises this database as distributed queries,

which I believe is working really nicely, since the return time on the queries are

about 3 to 5 seconds. Remember these are very small low-end commodity hardware and were not

designed for clustered database servers. Still, the results are fast enough and hopefuly

Tableau can then connect to node1 and see the tables.

Plan "Z" I installed a number of database clustering technologies on my Raspberry Pi cluster.

I put HBase database on it, wich works quite well and I can see the nodes in the administrative

web page. However I could not figure out how to load data into HBase. Even though that is

a distributed database - apparently it has to run on Hadoop, and can not do anything on its own.

The learning curve on this is pretty steep. I installed Hadoop too, and the next step for HBase

but so far I ran into a myriad of issues - mainly that it has an issue with the Raspberry's ARM

processor. So perhaps, I have to compile Hadoop on the Pi first. I downloaded Phoenix which

is an SQL, DBMS type interface for HBase, but I won't be able to evaluate that until Hadoop

runs and finally I can load HBase.

I also installed CouchDB - which did not help at all. I had a hard time to understand how I can

load a JSON or CSV file to it to populate something. CouchDB is not a relational database so

for this project it is an inappropriate choice - however from the description on the site I

could not decipher this, at first.

I installed Gaian database, which I could never, ever start up, because of the myriad of errors.

Finally, I installed SQLite. The official Raspberry Pi version of SQLite is so old - it does not

recognize CSV files as a valid data mode for loading. So, I downloaded SQLite from an unofficial

mirror in source code and compiled the entire database on the Pi. The compile went just fine and

is now running. Wow !! What a surprise ! Yes, it is running ! Although SQLite is more

of an embedded database for phones and devices rather than anything else, it is blazing fast !

Who needs a distributed query database when this little server smokes on the Raspberry Pi - it is so fast. It returned the results of every query I threw at it in less than a single second !! Wow ! I could not

believe that this database returns 4 months of data tabulated, grouped and ordered out

of 2.75 million records in less than a second !! And I just created only a single

composite index on the entire data set. The dimension tables I left alone, did not even bothered

to index them. If I can figure out how to run this as a daemon on Linux so Tableau could

connect to it - it would be beautiful - even on a single node !

Conclusion: There are a myriad of clustered, distributed, massively parallel processed, noSQL,

newSQL and whatever else they are named databases and/or file systems out there. I had only

a very short time to evaluate some of them. The limitations of the hardware revealed quite

a few lessons. Nevertheless, I think that the Raspberry Pi is a seriously underestimated

piece of technology. I had a ton of fun working on this project and will keep hacking the

Pis even longer. Over all, the Raspberry Pis are awesome for pilot testing, debugging

performance bottlenecks, but most of all - it is a portable micro-data warehouse - which is

awesome ! The best part though, you can show off Crate on the Pi stack and show that you have

a distributed query database cluster - a supercomputer in the palm of your hand !

|

Raspberry Pi on Crate cluster - 4 nodes

Raspberry Pi on Crate cluster database tables

Raspberry Pi on Crate cluster - SQLs

Raspberry Pi cluster parallel pull from 4 mySQL databases with a Perl script

|